What Is an AI Voice Agent? A 2026 Explainer

Article Summary: An AI voice agent is a conversational system that handles customer requests through natural dialogue, replacing rigid IVR menus. In 2026, the voice AI market reached $22 billion, with 88 percent of contact centers using AI.

Table of contents for this article

- 1. Defining the AI Voice Agent

- 1.1 What Is an AI Voice Agent?

- 1.2 The Evolution from IVR to Agentic Voice

- 2. AI Voice Agent vs. IVR vs. Chatbot: Key Differences

- 2.1 AI Voice Agent vs. Traditional IVR

- 2.2 AI Voice Agent vs. Text Chatbot

- 2.3 Voice Bot vs. Voice Agent

- 3. Technical Architecture: How AI Voice Agents Work

- 3.1 The Four-Technology Pipeline

- 3.1.1 Automatic Speech Recognition (ASR)

- 3.1.2 Natural Language Understanding (NLU) and Large Language Models (LLMs)

- 3.1.3 Decision Engine and Agentic Execution

- 3.1.4 Text-to-Speech (TTS)

- 3.2 Retrieval-Augmented Generation (RAG) and Knowledge Grounding

- 3.3 Production Constraints and Latency

- 4. Mainstream Application Scenarios

- 4.1 Tier-1 Issue Resolution and High-Volume Inquiry Handling

- 4.2 After-Hours Coverage and 24/7 Availability

- 4.3 Scalability for Demand Spikes

- 4.4 Proactive Outbound Notifications

- 4.5 Warm Escalation with Full Context

- 4.6 Multilingual and Cross-Border Support

- 5. AI Voice Agent Selection Checklist

- 5.1 Evaluation Dimension 1: Accuracy Under Real-World Conditions

- 5.2 Evaluation Dimension 2: Integration Depth with Business Systems

- 5.3 Evaluation Dimension 3: Latency and Uptime

- 5.4 Evaluation Dimension 4: Security and Compliance

- 5.5 Evaluation Dimension 5: Total Cost of Ownership and ROI

- 6. FAQ

- Q1. How much does deploying an AI voice agent typically cost compared to adding human agents?

- Q2. Will AI voice agents replace all customer service agents?

- Q3. Can AI voice agents understand different accents and languages?


In 2026, AI voice agents have transformed the phone channel from a source of customer frustration into a platform for natural, conversational, and efficient resolution. Organizations that deploy them well will not just answer calls faster. They will resolve more calls, handle surging volumes without hiring sprees, and deliver the voice experience that modern customers now expect as the baseline. For businesses ready to move beyond the IVR, Udesk offers a proven AI voice agent solution built on enterprise-grade architecture and integrated into a unified omnichannel customer service platform.

1. Defining the AI Voice Agent

1.1 What Is an AI Voice Agent?

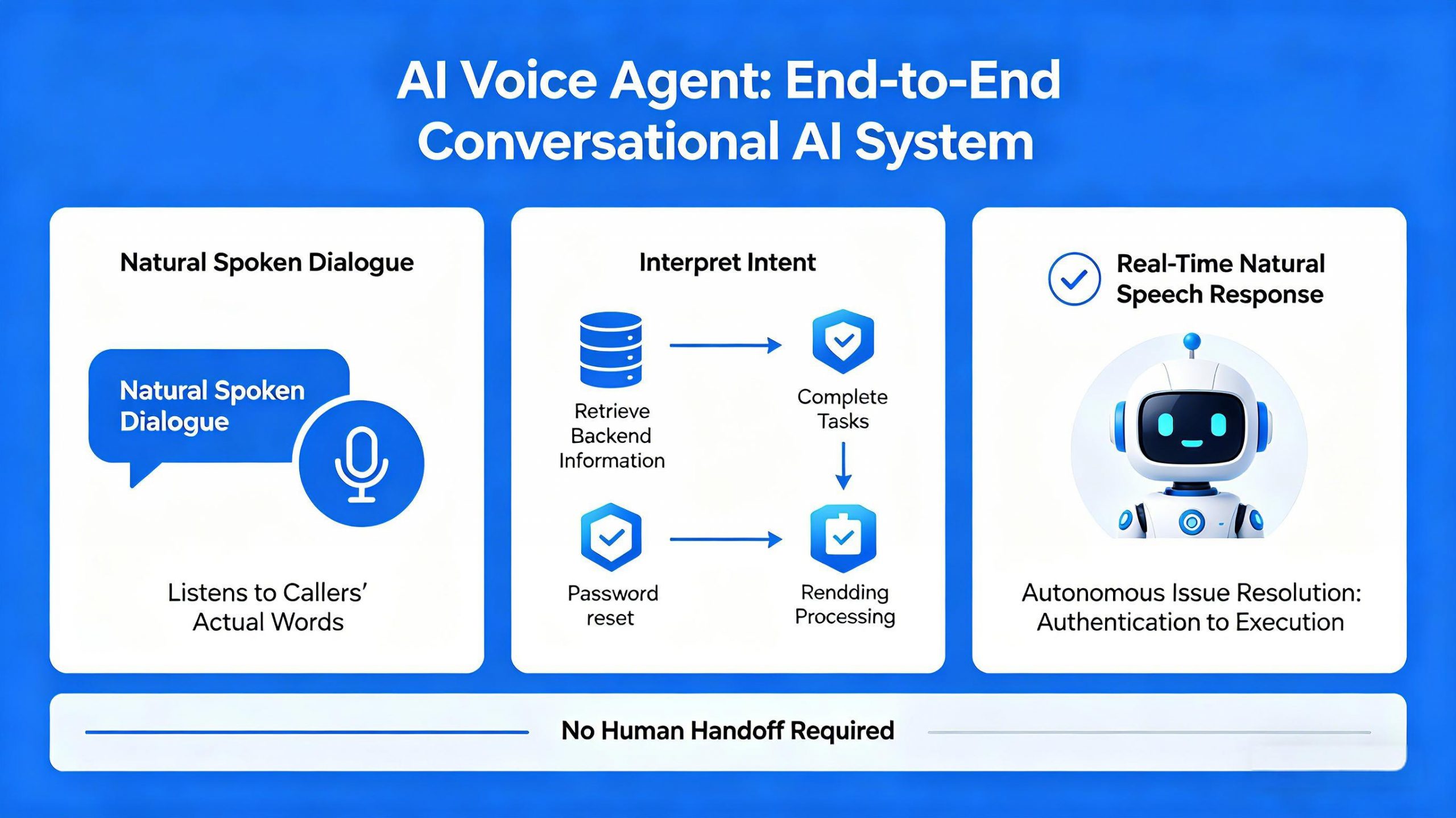

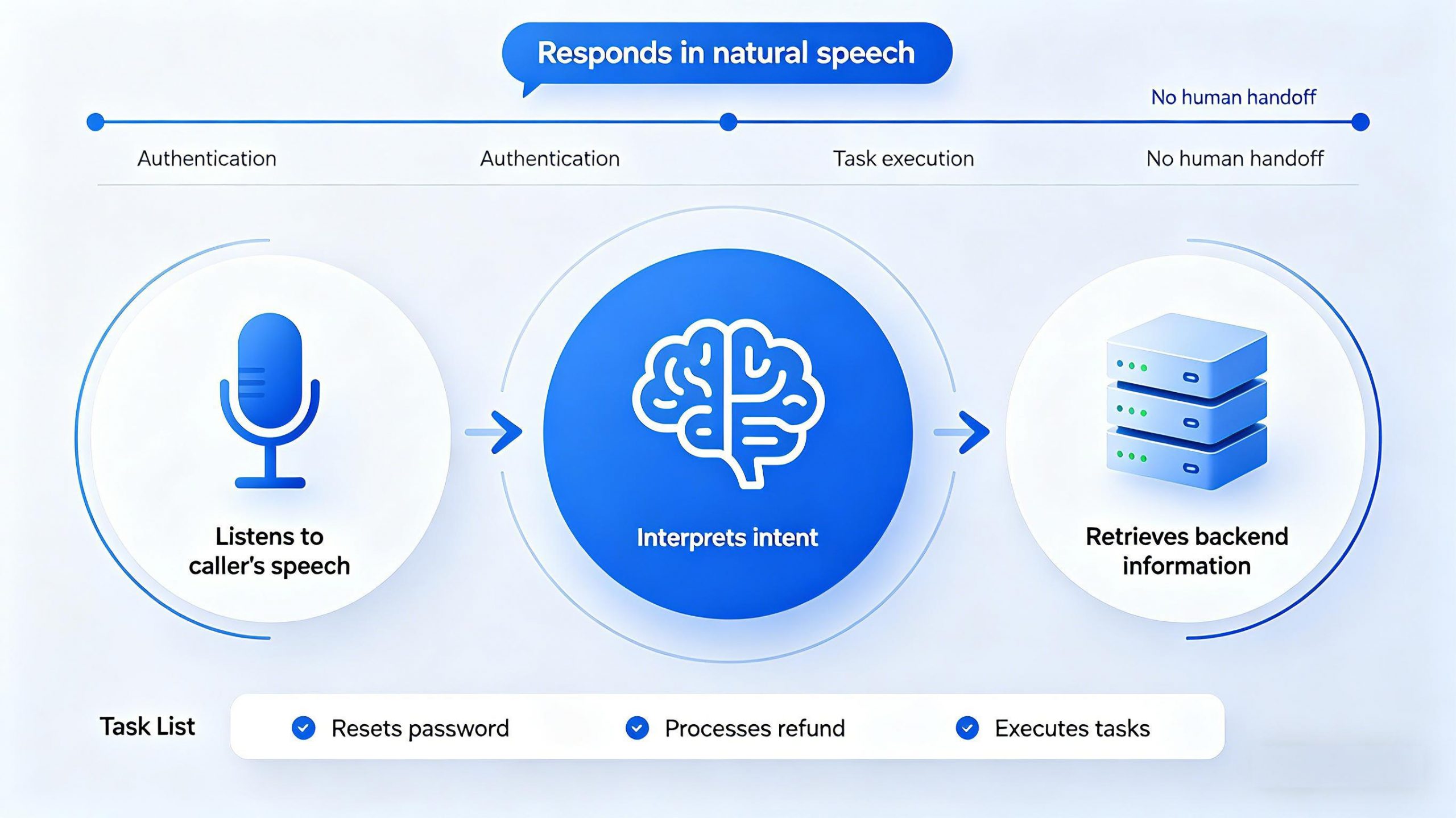

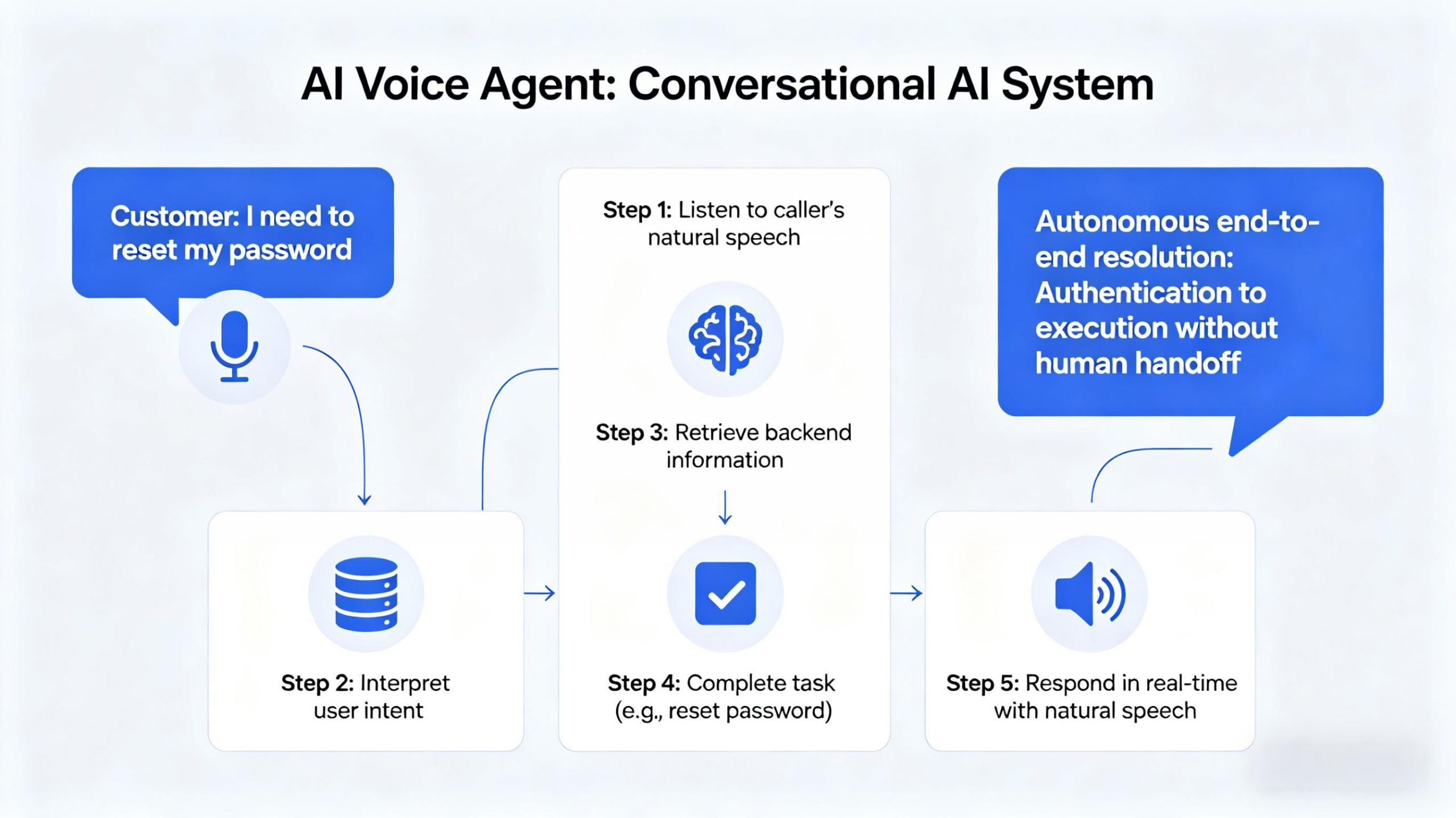

An AI voice agent is a conversational AI system that handles customer requests through natural, spoken dialogue instead of rigid menu trees. It listens to what callers actually say, interprets their intent, retrieves relevant information from backend systems, completes tasks such as resetting passwords or processing refunds, and responds in real time using natural-sounding speech. Modern AI voice agents can resolve issues end to end, from authentication to execution, without requiring a human handoff.

1.2 The Evolution from IVR to Agentic Voice

The journey from legacy IVR to AI voice agents represents a fundamental shift in design philosophy. Traditional IVR systems forced callers through fixed decision trees using keypad presses and keyword spotting. Callers navigated a maze of menus, pressing numbers to reach a department, not a resolution. The system handled the call, but a human still had to handle the problem.

Agentic Voice inverts that model entirely. An AI voice agent authenticates the caller, retrieves the account, applies business policies, executes the transaction, confirms the outcome, and closes the loop—all inside a single natural conversation. According to Gartner, agentic AI is projected to autonomously resolve 80 percent of common customer service issues by 2029. This is not an incremental improvement; it is a categorical shift from managing calls to completing work.

For organizations ready to make this shift, Udesk provides an AI-native voice agent solution integrated into a unified omnichannel customer service platform. Udesk supports voice bots for process control across inbound and outbound scenarios, with sub-1-second response times and highly natural text-to-speech synthesis. The platform connects to over 20 communication channels in a single workspace.

2. AI Voice Agent vs. IVR vs. Chatbot: Key Differences

Understanding what an AI voice agent is requires knowing what it is not. Three terms often create confusion, and clarifying the distinctions is essential for procurement and expectation-setting.

2.1 AI Voice Agent vs. Traditional IVR

The difference is fundamental: IVR is a menu system; a voice agent is a conversation. Legacy IVR relies on rigid, sequential menus where callers press numbers or speak simple keywords. It captures limited isolated data points with no ability to handle phrasing the system has never seen before. An AI voice agent, by contrast, uses natural language understanding to comprehend meaning, adapt dynamically, and handle complex reasoning.

A legacy IVR typically contains only 20 to 30 percent of calls, meaning it resolves them without escalating to a human, because anything outside a narrow menu gets punted to an agent. A well-tuned AI voice agent reaches containment of 70 to 95 percent within the first 90 days of deployment, because callers can describe their issue in their own words and get it resolved in the same conversation. Nearly 80 percent of CX leaders now believe voice AI is ushering in a new era of seamless problem-solving, leaving robotic IVRs behind.
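The containment figures above are a simple ratio: calls resolved without escalation divided by total calls. A minimal sketch follows, assuming each call record carries a hypothetical `escalated` flag; the sample data is invented for illustration.

```python
def containment_rate(calls):
    """Share of calls resolved without escalation to a human."""
    if not calls:
        raise ValueError("no calls to measure")
    contained = sum(1 for call in calls if not call["escalated"])
    return contained / len(calls)


# Hypothetical sample: 10 calls, the first 3 of which were escalated.
sample_calls = [{"escalated": i < 3} for i in range(10)]
rate = containment_rate(sample_calls)
print(f"containment: {rate:.0%}")
```

Measured this way, containment only counts as a success if the caller's issue was actually resolved, so in practice it is usually tracked alongside repeat-call and satisfaction metrics.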

2.2 AI Voice Agent vs. Text Chatbot

A text chatbot operates over text channels such as web chat, WhatsApp, or messaging apps. It benefits from structured input and can handle typed interactions effectively. A voice agent operates over voice—phone calls, audio streams, and voice-enabled channels. It must handle background noise, regional accents, overlapping speech, natural interruptions, and real-time latency. Text-based systems skip the hardest technical problems: converting audio to text, detecting when a caller's turn is finished, and generating spoken responses with appropriate pacing.

Many enterprises deploy both: voice agents for phone interactions and text chatbots for web and messaging channels. They are complementary, not competing.

2.3 Voice Bot vs. Voice Agent

In 2026, these terms are often used interchangeably, but small nuances exist. A voice bot may have a narrower scope, handling transactional or single-domain tasks. A voice agent implies broader autonomy: navigating multiple backend systems, making decisions, and transferring calls intelligently. For the purposes of this guide, both terms describe AI systems managing voice conversations at scale.

3. Technical Architecture: How AI Voice Agents Work

3.1 The Four-Technology Pipeline

Every production AI voice agent runs through the same pipeline of four core technologies working in sequence. If one component fails, the caller and the outcome will both feel the impact.

3.1.1 Automatic Speech Recognition (ASR)

ASR converts the caller's spoken audio into text in real time. It is the entry point of the pipeline. Modern ASR uses streaming architectures that produce partial transcriptions continuously, allowing the system to begin reasoning before the caller finishes speaking. Accent coverage, domain vocabulary, background noise handling, and Word Error Rate (WER) all matter here. A WER above 20 percent makes a voice agent functionally unusable in production.
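WER is conventionally computed as the number of word substitutions, deletions, and insertions needed to turn the ASR output into the reference transcript, divided by the number of words in the reference. The sketch below implements that with a word-level edit distance; the function name and the sample sentences are illustrative, not taken from any particular ASR toolkit.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Word-level edit distance divided by the reference length."""
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference transcript is empty")

    # prev[j] holds the edit distance between the reference words
    # processed so far and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i] + [0] * len(hyp)
        for j, hyp_word in enumerate(hyp, start=1):
            cost = 0 if ref_word == hyp_word else 1
            curr[j] = min(
                prev[j] + 1,         # deletion (reference word missing)
                curr[j - 1] + 1,     # insertion (extra hypothesis word)
                prev[j - 1] + cost,  # substitution or exact match
            )
        prev = curr
    return prev[-1] / len(ref)


# Hypothetical example: one substitution and one deletion in six words.
wer = word_error_rate(
    "please reset my account password today",
    "please reset my count password",
)
print(f"WER: {wer:.1%}")
```

Two errors across six reference words gives a WER of about 33 percent, well above the 20 percent level described above as unusable.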

3.1.2 Natural Language Understanding (NLU) and Large Language Models (LLMs)

Once speech is transcribed, NLU and LLMs interpret what the caller actually wants. Modern platforms use LLMs to handle open-ended phrasing, carry conversation context across multiple turns, and perform multi-step reasoning. This is the shift from matching a keyword to understanding the intent behind any phrasing. LLM inference, not speech recognition, is typically the largest latency bottleneck, averaging approximately 670 milliseconds per generation.

3.1.3 Decision Engine and Agentic Execution

The decision engine determines what action to take based on the understood intent. It may retrieve customer data, check business policies, execute transactions, or decide to escalate to a human. Agentic execution means the AI does not just suggest actions—it performs them. It authenticates the caller, pulls account history, applies refund policies, updates CRM records, and closes the loop within a single conversation. Enterprise platforms connect directly to CRM systems, ticketing tools, and ITSM platforms to execute multi-step workflows.
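One common way to structure this step is a dispatch table that maps each recognized intent to a handler, with escalation as the fallback. The sketch below is illustrative only: the intent names, the confidence floor, and the refund limit are hypothetical values, not settings from any specific platform.

```python
from dataclasses import dataclass


@dataclass
class Decision:
    action: str   # "authenticate", "execute", or "escalate"
    detail: str


REFUND_LIMIT = 100.00     # hypothetical auto-approval policy threshold
CONFIDENCE_FLOOR = 0.75   # hypothetical minimum intent confidence


def handle_refund(slots):
    amount = slots.get("amount", 0.0)
    if amount > REFUND_LIMIT:
        return Decision("escalate", "refund exceeds auto-approval limit")
    return Decision("execute", f"refund of {amount:.2f} issued")


def handle_password_reset(slots):
    return Decision("execute", "password reset link sent")


HANDLERS = {
    "refund": handle_refund,
    "password_reset": handle_password_reset,
}


def decide(intent, confidence, slots, authenticated):
    """Pick the next action for one understood caller request."""
    if not authenticated:
        return Decision("authenticate", "verify caller identity first")
    handler = HANDLERS.get(intent)
    if handler is None or confidence < CONFIDENCE_FLOOR:
        return Decision("escalate", "intent unclear or unsupported")
    return handler(slots)


print(decide("refund", 0.92, {"amount": 40.0}, authenticated=True))
print(decide("refund", 0.92, {"amount": 250.0}, authenticated=True))
```

Keeping policy checks inside the handlers means the same rule applies whether the request arrives by voice or through another channel.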

3.1.4 Text-to-Speech (TTS)

TTS converts the system's generated response back into spoken audio with natural pacing and appropriate emotional tone. Modern TTS has become natural enough that customers frequently cannot distinguish AI from human speech in live interactions. Key differentiators include voice quality, language and dialect support, and the ability to handle interruptions naturally. End-to-end round-trip latency (ASR to NLU to decision to TTS) typically ranges from 450 milliseconds to 1.5 seconds in production systems.
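The four stages can be pictured as functions chained in sequence, with each stage's latency adding to the round trip. The sketch below uses trivial stand-in functions in place of real speech and language models, so every function body here is a placeholder assumption; only the sequencing and per-stage timing are the point.

```python
import time


def asr(audio: bytes) -> str:
    # Stand-in: a real system would stream audio to a speech recognizer.
    return audio.decode("utf-8")


def understand(text: str) -> str:
    # Stand-in for NLU/LLM intent interpretation.
    return "order_status" if "order" in text.lower() else "unknown"


def choose_reply(intent: str) -> str:
    # Stand-in for the decision engine and backend lookups.
    replies = {"order_status": "Your order shipped yesterday."}
    return replies.get(intent, "Let me connect you with a colleague.")


def tts(text: str) -> bytes:
    # Stand-in: a real system would synthesize audio here.
    return text.encode("utf-8")


STAGES = [("asr", asr), ("nlu", understand), ("decision", choose_reply), ("tts", tts)]


def run_turn(audio: bytes):
    """Run one caller turn through all four stages, timing each one."""
    timings_ms = {}
    value = audio
    for name, stage in STAGES:
        start = time.perf_counter()
        value = stage(value)
        timings_ms[name] = (time.perf_counter() - start) * 1000
    return value, timings_ms


reply_audio, timings = run_turn(b"Where is my order?")
print(reply_audio.decode("utf-8"), timings)
```

Production systems typically stream between stages rather than waiting for each to finish, which is how they overlap work and shorten the perceived round trip.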

3.2 Retrieval-Augmented Generation (RAG) and Knowledge Grounding

In enterprise deployments, AI voice agents cannot rely solely on the LLM's training data. They need access to verified, up-to-date company information. RAG retrieves relevant company data and supplies it to the LLM before it generates a response, which reduces hallucinations and keeps answers tied to verifiable sources rather than merely sounding confident. For support scenarios, RAG pulls from knowledge bases, product documentation, and policy manuals. For transactional scenarios, it retrieves real-time customer account data from integrated backend systems.
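The retrieve-then-generate flow can be sketched in a few lines. This toy version ranks passages by simple word overlap as a stand-in for the embedding-based search that production systems typically use, and it stops at building the grounded prompt rather than calling any model. The knowledge-base entries are hypothetical.

```python
import re

# Hypothetical policy snippets standing in for a company knowledge base.
KNOWLEDGE_BASE = [
    "Refunds are available within 30 days of purchase with a receipt.",
    "Password resets require the email address on the account.",
    "Standard delivery takes 3 to 5 business days.",
]


def tokenize(text):
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question, documents, top_k=1):
    """Rank documents by word overlap with the question (toy scoring)."""
    question_words = tokenize(question)
    ranked = sorted(
        documents,
        key=lambda doc: len(question_words & tokenize(doc)),
        reverse=True,
    )
    return ranked[:top_k]


def build_prompt(question, documents):
    """Place retrieved passages ahead of the question to ground the answer."""
    context = "\n".join(f"- {passage}" for passage in retrieve(question, documents))
    return (
        "Answer using only the context below. "
        "If the context does not cover the question, say so.\n"
        f"Context:\n{context}\n"
        f"Question: {question}"
    )


print(build_prompt("How long does delivery take?", KNOWLEDGE_BASE))
```

The instruction to admit when the context does not cover the question is what lets the agent escalate instead of guessing.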

3.3 Production Constraints and Latency

As of 2026, production AI voice agents often still respond more slowly than the pace of natural human conversation. Human turn-taking feels natural with response gaps of roughly 200 milliseconds, whereas enterprise-scale systems typically show complete round-trip latency of 450 milliseconds to 1.5 seconds. Within that 450 to 1,500 ms range, platform selection, architecture choices, and real-world network conditions determine whether a call feels magical or mechanical.
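A rough latency budget makes the arithmetic concrete. In the sketch below, the 670 ms LLM figure and the 200 ms conversational window come from this article; the ASR, TTS, and network values are hypothetical placeholders chosen only to illustrate how the stages add up.

```python
HUMAN_TURN_GAP_MS = 200  # conversational window cited in this article

stage_ms = {
    "asr_finalization": 150,  # hypothetical
    "llm_generation": 670,    # average cited in this article
    "tts_first_audio": 120,   # hypothetical
    "network": 80,            # hypothetical
}

total_ms = sum(stage_ms.values())
over_window_ms = total_ms - HUMAN_TURN_GAP_MS
largest_stage = max(stage_ms, key=stage_ms.get)

print(f"round trip: {total_ms} ms")
print(f"over the conversational window by: {over_window_ms} ms")
print(f"largest contributor: {largest_stage}")
```

Under these assumptions the round trip is 1,020 ms, inside the 450 to 1,500 ms production range, and the LLM stage alone accounts for about two thirds of it.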

Udesk addresses these production constraints with sub-1-second response times for its AI voice bots, powered by a distributed cloud-native architecture. The platform supports multiple mainstream large language models including OpenAI and DeepSeek for voice bot process control, and its RAG-based architecture delivers high intent recognition accuracy with low inference latency.

4. Mainstream Application Scenarios

4.1 Tier-1 Issue Resolution and High-Volume Inquiry Handling

AI voice agents are most effective at handling common, repetitive customer inquiries that follow predictable patterns. Password resets, account balance checks, order status updates, delivery tracking, and billing inquiries are all well-suited to full automation. A well-tuned voice agent typically handles half or more of incoming calls fully autonomously, freeing human agents for higher-value, higher-judgment tasks. Broader figures are more conservative: according to recent research, many organizations report that AI systems can resolve 30 to 50 percent of routine inquiries without human intervention, which still substantially reduces the workload for support teams.

4.2 After-Hours Coverage and 24/7 Availability

Contact centers face a persistent challenge: customers expect support at all hours, but full staffing is cost-prohibitive after peak hours. AI voice agents work 24/7 at the same marginal cost per call, regardless of time. They handle after-hours inquiries, weekend requests, and holiday surges without overtime, shift premiums, or recruitment delays. The median cost per self-service contact is $1.84, compared to $13.50 for assisted contacts. The economics of after-hours coverage shift dramatically with AI voice deployment.

4.3 Scalability for Demand Spikes

Product launches, seasonal peaks, and service disruptions create sudden spikes in support volume that human teams struggle to absorb. Scaling human teams quickly is difficult and expensive. AI voice agents scale almost instantly, handling thousands of simultaneous conversations without requiring additional staff. This makes them particularly valuable for banking, telecommunications, e-commerce, and travel sectors, where volume surges are often predictable in timing but unpredictable in magnitude.

4.4 Proactive Outbound Notifications

Voice AI is not only an inbound tool. In outbound scenarios, AI voice agents can make proactive notifications about delays, service disruptions, appointment reminders, payment due dates, and promotional offers. They deliver messages in natural conversation, can handle follow-up questions immediately, and escalate to a human if the interaction becomes too complex. Outbound voice AI extends the utility of the platform beyond the inbound queue into customer engagement and retention.

4.5 Warm Escalation with Full Context

When a voice agent encounters a request beyond its scope, it escalates to a human agent. But unlike IVR transfers that arrive with no context at all, modern AI voice agents perform a warm transfer: the human agent receives the full conversation transcript, the reason for escalation, and any preliminary action already taken. This eliminates blind transfers and prevents customers from starting over. For human agents, working with AI copilots that provide real-time summaries, suggested answers, and context is now standard practice.
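The context passed on a warm transfer can be modeled as a small structured payload. The field names below are hypothetical and only mirror the three items described above: the transcript, the escalation reason, and the actions already taken.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class HandoffContext:
    caller_id: str
    escalation_reason: str
    transcript: list = field(default_factory=list)     # ordered caller/agent turns
    actions_taken: list = field(default_factory=list)  # steps already completed

    def to_json(self) -> str:
        """Serialize the context for the human agent's desktop."""
        return json.dumps(asdict(self), indent=2)


# Hypothetical escalation of a refund that exceeds the automated limit.
context = HandoffContext(
    caller_id="caller-001",
    escalation_reason="refund exceeds auto-approval limit",
    transcript=[
        {"speaker": "caller", "text": "I want a refund for my last order."},
        {"speaker": "agent", "text": "I can help. Let me verify your identity."},
    ],
    actions_taken=["identity verified", "order located"],
)
print(context.to_json())
```

Sending this payload with the transfer is what lets the human agent pick up where the AI stopped instead of re-asking for identity and order details.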

4.6 Multilingual and Cross-Border Support

Global customer bases require multilingual support that scales. AI voice agents handle multiple languages and regional dialects, automatically detecting the caller's language and responding appropriately. In 2026, Voice AI increasingly supports real-time language adaptation within a single conversation, allowing a caller to ask a question in one language and receive a response seamlessly in another. This capability is particularly valuable for organizations serving customers across different regions with limited in-house multilingual agent capacity.

5. AI Voice Agent Selection Checklist

With dozens of vendors entering the market, enterprise teams need a structured framework to evaluate platforms beyond marketing demos.

5.1 Evaluation Dimension 1: Accuracy Under Real-World Conditions

Vendor demos are optimized for controlled environments: clean audio, standard accents, scripted intents, and CRM sandboxes that behave nothing like actual customer records. Ask to test the platform in your actual operating conditions. Key questions: How does the ASR perform with regional accents and overlapping speech? What is the WER in real-world noise conditions? Does the LLM handle domain-specific vocabulary and edge cases gracefully? Can the agent maintain coherent context across multi-turn conversations?

5.2 Evaluation Dimension 2: Integration Depth with Business Systems

AI voice agents must connect to the systems where customer data lives. Evaluation criteria include: out-of-the-box connectors with existing CRM, ticketing, and ERP systems; APIs and webhooks for custom integrations; ability to read from and write to backend databases in real time; and support for authentication and user verification workflows. The platform's ability to pull account history, check policies, execute transactions, and update records—all within the conversation—is what distinguishes a demo from a production-ready solution.

5.3 Evaluation Dimension 3: Latency and Uptime

Latency directly determines customer experience. If a pause feels unnatural, callers become frustrated and abandon the call. Round-trip latency under 1 second is a basic requirement; consistently under 500 milliseconds is exceptional but not yet the industry standard for enterprise scale. Ask vendors for published latency metrics under production load, not in controlled test environments. Uptime guarantees and failover mechanisms for high-availability deployments are also critical, especially for 24/7 operation.
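When reviewing vendor latency data, tail percentiles are more telling than averages, because a few slow turns are what callers notice. The sketch below applies a nearest-rank percentile to a hypothetical set of round-trip measurements and checks them against the 1-second requirement above; the sample values are invented.

```python
import math


def percentile(samples, pct):
    """Nearest-rank percentile of a non-empty list of numbers."""
    if not samples:
        raise ValueError("no samples")
    ordered = sorted(samples)
    rank = math.ceil(pct / 100 * len(ordered))
    return ordered[max(rank, 1) - 1]


# Hypothetical round-trip latencies in milliseconds from a load test.
latencies_ms = [420, 480, 510, 530, 560, 610, 640, 700, 880, 1450]

mean_ms = sum(latencies_ms) / len(latencies_ms)
p50_ms = percentile(latencies_ms, 50)
p95_ms = percentile(latencies_ms, 95)
meets_target = p95_ms < 1000

print(f"mean {mean_ms:.0f} ms, p50 {p50_ms} ms, p95 {p95_ms} ms, target met: {meets_target}")
```

In this sample the mean (678 ms) and median (560 ms) both look comfortable, yet the 95th percentile is 1,450 ms, so the deployment would miss a sub-1-second target for its slowest turns.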

5.4 Evaluation Dimension 4: Security and Compliance

Enterprise voice AI must meet strict security and compliance requirements. Data encryption in transit and at rest is mandatory. Role-based access controls and audit trails ensure governance over who accessed what customer data. For regulated industries, look for SOC2, GDPR compliance, and HIPAA eligibility with signed Business Associate Agreements. Private cloud or on-premises deployment options may be required for high-security sectors. Compliance requirements often constrain architecture choices before you even pick a vendor.

5.5 Evaluation Dimension 5: Total Cost of Ownership and ROI

Traditional per-call or per-agent pricing must be evaluated against total value delivered. The median cost per self-service contact is $1.84, compared to $13.50 for human-assisted contacts. Human agents cost $15 to $25 per hour fully loaded; voicebot calls typically run from $0.07 to $0.15 per minute. Teams routinely cut per-call costs by 50 to 70 percent on routine queries when deploying voice AI. Most enterprises see positive ROI within 3 to 6 months, driven by lower per-call costs, eliminated turnover expense, and 24/7 availability without overtime or shift premiums. Gartner projects $80 billion in industry-wide labor cost reduction by 2026 from conversational AI deployments within contact centers. ROI is not just cost savings, however: first-contact resolution, average handle time, customer satisfaction scores, agent productivity gains, and revenue outcomes can all improve measurably.
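The per-call comparison can be worked through directly from the hourly and per-minute figures. The sketch below assumes a $20 hourly rate and a $0.10 per-minute rate, both picked from inside the ranges quoted above, plus a hypothetical five-minute call; it ignores idle time, platform fees, and escalated calls, so treat it as a rough illustration rather than a pricing model.

```python
HUMAN_HOURLY_USD = 20.00    # assumed value within the $15 to $25 range
BOT_PER_MINUTE_USD = 0.10   # assumed value within the $0.07 to $0.15 range
CALL_MINUTES = 5            # hypothetical average handle time


def human_call_cost(minutes, hourly_rate=HUMAN_HOURLY_USD):
    """Labor cost of a human-handled call at a fully loaded hourly rate."""
    return hourly_rate / 60 * minutes


def bot_call_cost(minutes, per_minute=BOT_PER_MINUTE_USD):
    """Usage cost of a voice-agent call billed per minute."""
    return per_minute * minutes


human_usd = human_call_cost(CALL_MINUTES)
bot_usd = bot_call_cost(CALL_MINUTES)
savings = 1 - bot_usd / human_usd

print(f"human ${human_usd:.2f}, bot ${bot_usd:.2f}, savings {savings:.0%}")
```

With these assumed inputs the saving is about 70 percent, at the top of the 50 to 70 percent range; because both costs scale with call length, the percentage depends on the two rates rather than on the handle time.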

6. FAQ

Q1. How much does deploying an AI voice agent typically cost compared to adding human agents?

Traditional agent costs average $15 to $25 per hour fully loaded, while AI voice agent calls typically cost $0.07 to $0.15 per minute. Teams routinely cut per-call costs by 50 to 70 percent on routine queries. Most enterprises see positive ROI within 3 to 6 months of deployment.

Q2. Will AI voice agents replace all customer service agents?

No. AI voice agents excel at routine, high-volume tasks such as password resets, order status checks, and billing inquiries. They handle 50 to 70 percent of typical call volume independently. Complex issues requiring empathy, judgment, or nuanced policy interpretation still require human agents. The role of agents shifts from handling volume to handling exceptions, escalations, and high-value interactions.

Q3. Can AI voice agents understand different accents and languages?

Yes. Modern AI voice agents support multiple languages, regional dialects, and domain-specific terminology. Enterprise-grade platforms train speech models on real-world conversational datasets across diverse conditions. Critically, advanced systems can switch languages mid-conversation to support global customer bases.


This article is original to Udesk; reprints must credit the source: https://www.udeskglobal.com/blog/what-is-an-ai-voice-agent-a-2026-explainer.html

Customer Service & Support Blog